What is human reliability?

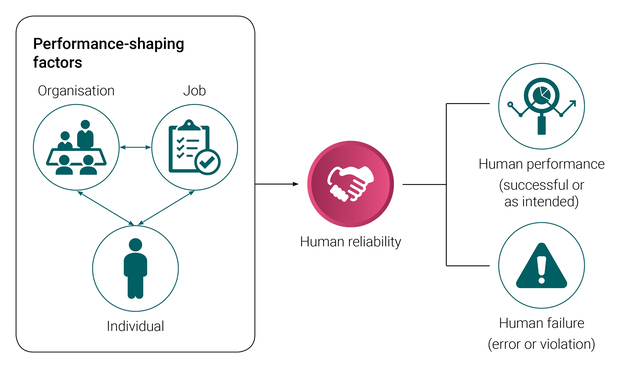

Human reliability refers to the likelihood of successful human performance within specified timeframes and environmental conditions. It is critical to overall system reliability and is one factor that contributes to, or prevents, the occurrence of unwanted events.

Management of human reliability

Managing human reliability involves increasing the likelihood of achieving desired performance outcomes and reducing the likelihood of human error. There are multiple factors that contribute to the likelihood of error or ‘shape’ human performance. These are referred to as performance-shaping factors and can be categorised as:

- job-related – e.g. difficulty or complexity of tasks, time available, physical work environment

- individual-related – e.g. physical capability and condition, stress, motivation

- organisation-related – e.g. clarity of roles and responsibilities, level of supervision, workplace culture.

Optimising the job, individual and organisational characteristics that influence human performance is key to improving reliability and reducing human failure.

Human failure

Human failure is not random. Understanding why failure occurs and the contributing factors will help identify more effective controls to prevent reoccurrence. There are two types of human failure: errors and violations.

Human error is the result of unintentionally inappropriate or undesirable human behaviour. It is common for unwanted events in industry to be attributed to human error when the desired performance outcome is not achieved.

While it is known that human error often plays a part in incidents, it is not enough to identify that somebody made an error, or to blame the people involved. This doesn’t provide the organisation with an opportunity to identify the latent hazards (e.g. within systems and procedures, or accepted deviations and common practices) that contributed to the event.

Violations – or non-compliances – are deliberate actions, usually in an attempt to solve problems, not cause them. They are not simply malicious behaviours. Most can be explained as entirely rational responses to the situation a worker was presented with.

In attributing the unwanted outcome entirely to human error, the organisation loses the opportunity to learn from the incident and prevent its reoccurrence. It can also perpetuate the belief that human error is a hazard in and of itself, rather than recognising human error for what it really is: a factor that can compromise or defeat safety controls, or act as an escalation factor.

If we focus on the human error as the main contributing factor, all of the typical actions taken to prevent reoccurrence – like training, discipline and reminders – will tend to focus on the individual. These types of actions are categorised as ‘administrative’. They are not effective ways of addressing all types of error and are low on the hierarchy of control.

Types of human error

There are many types of human error and they each have different causes. It is helpful to identify the type of error as this will inform the type of action required to reduce the likelihood of the same error occurring again. Not all remedial actions will address all types of error.

One model for understanding human error categorises errors as slips, lapses or mistakes.

Slips – when a process and its implementation are familiar, but there is a performance failure (e.g. pressing the wrong button or reading the wrong gauge – error of commission).

Lapses – a lapse of attention or memory (e.g. forgetting to carry out a step in a procedure – error of omission).

Mistakes – a mistake can be either of the following:

- rule-based, for example, a rule that is incorrectly applied to the current situation because it is similar to another situation that the rule applies to

- knowledge-based, for example, a solution to an issue is devised based on knowledge, experience and a ‘mental model’ of how the system works.

The problem with knowledge-based mistakes is that people are prone to numerous forms of bias in situations of uncertainty. They may make assumptions, settle for the first diagnosis they come up with or reject information that doesn’t support that diagnosis, and then take action on that basis. These biases can prevent a person making a fully informed decision. They can complicate the situation and make the problem more difficult to diagnose when the action fails to deliver the expected result, or leads straight to system failure.
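As an illustration only, the slips, lapses and mistakes model can be sketched as a small decision function in Python. The function name `classify_error` and its three yes/no questions are assumptions made for this sketch and are not part of any formal method; in practice, classification relies on the judgement of the people reviewing the task or incident.

```python
from enum import Enum


class ErrorType(Enum):
    SLIP = "slip"
    LAPSE = "lapse"
    RULE_BASED_MISTAKE = "rule-based mistake"
    KNOWLEDGE_BASED_MISTAKE = "knowledge-based mistake"


def classify_error(plan_was_appropriate: bool,
                   step_was_omitted: bool,
                   existing_rule_was_applied: bool) -> ErrorType:
    """Hypothetical helper: map three yes/no answers to an error type."""
    if plan_was_appropriate:
        # The intended action was right; the failure was in carrying it out.
        # An omitted step is a lapse, a wrongly performed step is a slip.
        return ErrorType.LAPSE if step_was_omitted else ErrorType.SLIP
    # The intended action itself was wrong, so this is a mistake.
    if existing_rule_was_applied:
        return ErrorType.RULE_BASED_MISTAKE
    return ErrorType.KNOWLEDGE_BASED_MISTAKE
```

For example, pressing the wrong button during a familiar task would be classified as a slip by `classify_error(True, False, False)`.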

Management of human error

Error risk management refers to reducing the likelihood of an error occurring in the first place. In order to invest in error risk management, it is essential for all safety-critical tasks that rely on human interaction to be identified. The role of people in initiating, preventing, controlling and mitigating the consequences of hazards associated with these tasks then needs to be integrated into the risk management process.

Management should be aware of the ways that human error can occur – especially in safety-critical tasks – and recognise the measures necessary to prevent errors, ensure early detection when an error is made and mitigate the consequences, should something go wrong. Specialist help may be needed to provide guidance on the key issues of human reliability; however, the primary means of improvement is the use of good hazard assessment and risk control practices.

Types of violations

The issue with violations, or non-compliances, is that they involve making a judgement about the risk involved. If this judgement is incorrect, the results can be disastrous. There are four types of violations:

- routine – the act of non-compliance is an accepted practice

- situational – it is not possible to get the job done by strictly following the rules

- exceptional – deviation from rules under unusual circumstances

- reckless – breaking rules despite known dangers to self and others.

Management of violations

To manage violations, it is necessary to understand why they occurred without making any assumptions. For the most part, human behaviour is motivated by the perceived or actual consequences that the behaviour is expected to bring. This means that in order to understand a behaviour, the perceived and actual consequences need to be identified. This can be done through an Antecedent Behaviour Consequence (ABC) analysis.
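One possible way to capture an ABC analysis is as a simple record. The Python sketch below is an assumption about how such a record might be structured; the class name `AbcRecord`, its fields and the example entry are all hypothetical and invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class AbcRecord:
    """One Antecedent Behaviour Consequence (ABC) entry for a violation."""
    antecedent: str  # what prompted or triggered the behaviour
    behaviour: str   # what the worker actually did
    perceived_consequences: list[str] = field(default_factory=list)
    actual_consequences: list[str] = field(default_factory=list)

    def is_complete(self) -> bool:
        """True once both perceived and actual consequences are recorded."""
        return bool(self.perceived_consequences) and bool(self.actual_consequences)


# Hypothetical example, invented for illustration only.
record = AbcRecord(
    antecedent="Production is behind schedule",
    behaviour="Isolation step skipped before maintenance",
    perceived_consequences=["Job finished sooner"],
)
print(record.is_complete())  # False: actual consequences not yet identified
```

Keeping perceived and actual consequences in separate fields reflects the point that both need to be identified before the behaviour can be understood.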

Human behaviour

People demonstrate three different types of behaviour when carrying out tasks, depending on the level of conscious effort applied. These are:

- skill-based – simple and routine, often repeated, tasks

- rule-based – apply rules to complete a task

- knowledge-based – apply significant conscious effort when the rules no longer apply.

Understanding the types of behaviour required to carry out tasks enables the identification of the types of error that can occur within those tasks.

Skill-based behaviour applies to tasks that are simple and routine and have been carried out many times before. Attention required for the task is minimal, with the person effectively running on autopilot. Although basic progress checks are performed from time to time, these checks are largely subconscious.

Problems occur when the attention for checking is diverted. An example of skill-based behaviour is driving a car. When you are driving a car, the majority of conscious attention is taken up with dynamic risk assessment; e.g. assessing changes in the road ahead and monitoring movements of other traffic. The actual skill-based behaviours to keep the car moving are largely subconscious for an experienced driver; e.g. coordinating the engagement of the clutch with a manual change of gear and using the steering wheel.

In rule-based behaviour, rules are applied to tasks either to help work through a problem or to identify the correct action to take. The rules may be stored in our memory or in the form of written instructions. For example:

- for a rule-based diagnosis task, apply ‘if this, then that’; e.g. if power is reaching the lamp, but there is no light, then the bulb is faulty.

- for a rule-based action task, apply ‘if this, then do this’; e.g. if the temperature in tank A has reached 75°C, then switch steam heating to half power.

Problems occur when part of the rule is neglected, the wrong rule is applied or a step in a written instruction is missed.
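The two rule examples above can be written as simple conditionals. This is a minimal Python sketch; the function names and the fallback return values are assumptions, since the rules in the text only say what applies when the condition is met.

```python
def diagnose_lamp(power_reaching_lamp: bool, lamp_is_lit: bool) -> str:
    """Rule-based diagnosis: 'if this, then that'."""
    if power_reaching_lamp and not lamp_is_lit:
        return "bulb is faulty"
    # Assumed fallback: the rule says nothing about other situations.
    return "rule does not apply"


def steam_heating_action(tank_a_temp_c: float) -> str:
    """Rule-based action: 'if this, then do this'."""
    if tank_a_temp_c >= 75.0:
        return "switch steam heating to half power"
    # Assumed fallback: below 75 degrees C the rule requires nothing.
    return "no change required by this rule"
```

Each rule only covers its own condition. A lamp with no light and no power falls outside the first rule, which is the kind of situation where applying the wrong rule can lead to a rule-based mistake.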

Knowledge-based behaviour is usually applicable in problem-solving or troubleshooting tasks and demands significant conscious effort when our rules no longer apply. This is when a solution to an issue is devised based on knowledge, experience and a ‘mental model’ of how the system works.

Example: A fuel truck driver filling a compartment at a depot expects to load 5000 litres of fuel. At 2500 litres, the fuel loading stops and the driver realises that the overfill protection system has activated. Their first thought is that the compartment may have been partially full when they began filling; however, they had checked the sight glass beforehand and it indicated the compartment was empty, so the overfill probe may be faulty. Another possibility is that the filling pump has failed. Without making further checks they do not know which of these diagnoses, if any, is correct.

The problem is that they may take action, such as overriding the overfill protection system, without carrying out any further checking.

Human factors in incident investigation

An incident investigation gathers and organises information that can be used to identify the human and organisational factors that contributed to the incident, and therefore inform recommendations for improvement.

The key to effective investigations is to ensure that the approach used discovers the underlying reasons why an incident occurred, not just the error made by the last person involved. Effective incident investigation is promoted by a ‘no blame’ culture – a culture that encourages incident reporting, and does not automatically assign blame to the person directly involved in the incident.

Human error in incidents

Human error by itself has little meaning when cited as the cause of an incident. Some incident analyses cite human error as the cause of the incident, and then go further and identify that the human error was due to a lack of training. A typical remedial action is to retrain the person involved in the incident. However, in terms of human and organisational factors, it is not sufficient to note ‘limited training’ or ‘lack of training’ as a root cause. The analysis should find out why the person involved lacked training, what led to an untrained person being involved in the task and what system within the organisation failed.

Factors that promote effective incident investigation include:

- a system allowing any worker (including contractors) to formally report an incident

- clear guidance on how initial reports are to be made and the information required in those reports

- the option to report anonymously

- rules for determining whether or not to investigate a reported incident, and the required speed of response (based on the actual or potential severity of the incident)

- resources to conduct an investigation, with external support available as needed.

Resources

- This webpage by the UK Health and Safety Executive (HSE) provides guidance and resources on human factors considerations for accident investigations.

- Human and organisational factors – A regulator’s perspective: this presentation discusses human and organisational factors, including managing human reliability, from the perspective of the NSW resources regulator.

- This document by the UK HSE outlines the different types of human failures, their defining characteristics and examples of control measures for each category of failure.